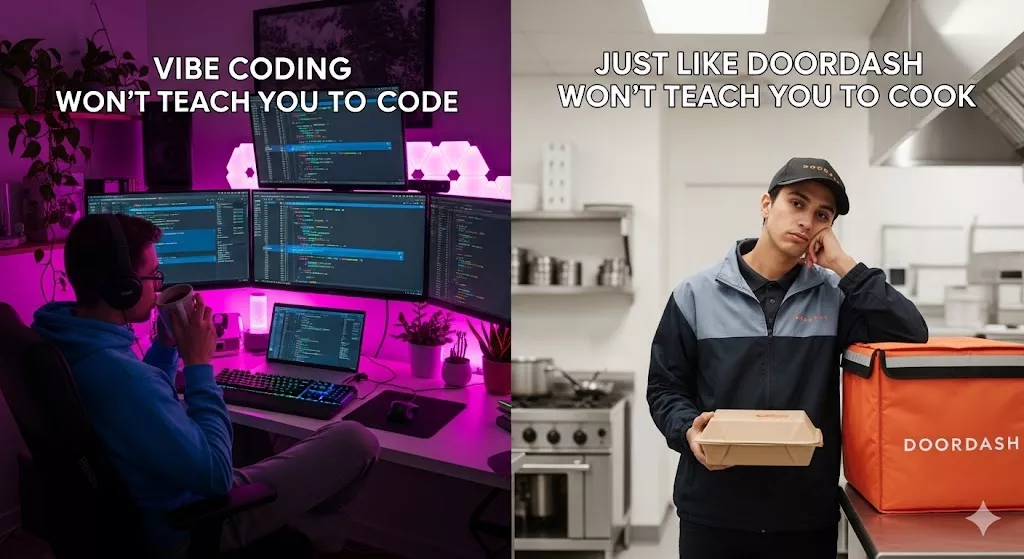

Vibe Coding Won’t Teach You to Code — Just Like DoorDash Won’t Teach You to Cook

There’s a comforting lie we tell ourselves when we use a shortcut long enough: eventually, I’ll absorb the skill by osmosis. Order enough pad thai through DoorDash and surely, one day, you’ll just know how to balance fish sauce and tamarind. Right?

Of course not. And yet, this is exactly the bargain a growing number of aspiring developers are making with vibe coding — the practice of describing what you want to an AI and letting it generate the code for you. Ship fast, learn later. Except “later” never comes.

What Vibe Coding Actually Is

The term, popularized in early 2025, describes a workflow where you treat an LLM as your entire engineering department. You prompt, it codes. You paste errors back in, it fixes them. You never read the code — you just vibe with the output. If the app works, you ship it. If it doesn’t, you prompt again.

And honestly? It’s incredible for prototyping. For getting a quick landing page up, or wiring together an internal tool you’ll use for a week. Nobody needs to deeply understand CSS grid to center a div once.

The problem starts when people mistake this workflow for learning.

The DoorDash Delusion

Think about what happens when you order food through a delivery app a thousand times. You develop opinions. You know which restaurants are reliable. You learn that the burrito place on 5th Street wraps things too loosely for delivery. You can navigate the app blindfolded.

But you cannot cook.

You haven’t learned what happens to garlic at different temperatures. You don’t know why your rice is mushy or why the sauce broke. You’ve built up a sophisticated consumption skill — you’re an expert eater — but you’ve developed zero production skill.

Vibe coding creates the same gap. After hundreds of prompts, you’ll get very good at describing software. You’ll learn which phrasings get better outputs. You’ll develop intuition for when the AI is hallucinating. You’ll become an expert consumer of code.

But you still won’t understand why your app crashes under load, why that database query takes eleven seconds, or why the authentication flow has a gaping security hole. You’ve become an expert at ordering — not at building.

The Illusion of Fluency

There’s a well-studied phenomenon in education called the illusion of fluency. When you watch someone perform a skill effortlessly — a chef julienning carrots, a pianist playing Chopin, an AI writing a React component — your brain registers understanding. You feel like you get it because you can follow along.

But following along and doing are fundamentally different cognitive acts. Reading code that an AI generates and understanding why it made those choices are worlds apart. The AI doesn’t explain that it chose a hash map for O(1) lookups, or that it used a debounce to prevent API flooding. It just does it. And you just vibe.
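To make that concrete, here is a minimal Python sketch of the kind of choice that goes unexplained. The `users` data and both function names are invented for illustration, not taken from any real codebase. Both lookups return the same record; the first scans the list item by item, while the second builds a dictionary (Python’s hash map) once and then answers each lookup in roughly constant time.

```python
# Hypothetical data set: the IDs and names are made up for this example.
users = [{"id": i, "name": f"user{i}"} for i in range(100_000)]


def find_by_scan(user_id):
    """Linear scan: checks records one by one, O(n) per lookup."""
    for user in users:
        if user["id"] == user_id:
            return user
    return None


# Build the index once (O(n)); after that each lookup is O(1) on average.
users_by_id = {user["id"]: user for user in users}


def find_by_index(user_id):
    """Hash map lookup: jumps straight to the record by its key."""
    return users_by_id.get(user_id)
```

Both functions give the same answer on every input, which is exactly why the difference is invisible if you only check whether the app works.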

This is why someone with six months of vibe coding under their belt can feel extremely productive and yet freeze completely the moment something breaks in a way the AI can’t fix — which, if you’ve shipped anything real, you know happens constantly.

What Cooking Actually Teaches You

When you learn to cook — really learn, not just follow a recipe once — you develop a mental model of how ingredients interact. You understand principles: acid balances fat, salt enhances flavor, high heat creates browning through the Maillard reaction. These principles transfer across every dish you’ll ever make.

Learning to code works the same way. When you actually wrestle with a sorting algorithm, you’re not just memorizing steps — you’re building intuition about time complexity, about trade-offs between memory and speed, about why certain data structures exist. When you manually implement authentication, you don’t just get a login screen — you understand tokens, session management, and attack surfaces.
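As a rough illustration of that memory-versus-speed trade-off, here are two textbook sorts in Python. This is a sketch for comparison only; in real code you would normally reach for the built-in `sorted`. Insertion sort rearranges the list in place and needs almost no extra memory, but it slows down quadratically in the worst case. Merge sort stays fast on large inputs, but pays for it by allocating new lists along the way.

```python
def insertion_sort(items):
    """Sorts the list in place: O(1) extra memory, O(n^2) time in the worst case."""
    for i in range(1, len(items)):
        current = items[i]
        j = i - 1
        # Shift larger elements one slot right to open a gap for `current`.
        while j >= 0 and items[j] > current:
            items[j + 1] = items[j]
            j -= 1
        items[j + 1] = current
    return items


def merge_sort(items):
    """Returns a new sorted list: O(n log n) time, O(n) extra memory."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left = merge_sort(items[:mid])
    right = merge_sort(items[mid:])
    # Merge the two sorted halves into a fresh list.
    merged, i, j = [], 0, 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged
```

Writing both by hand once is what makes a phrase like “O(n log n) with extra memory” mean something the next time you see it.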

These mental models are what separate someone who can build reliable software from someone who can prompt an AI into producing something that looks like reliable software.

The Debugging Cliff

Here’s where the analogy sharpens. Imagine your DoorDash order arrives and the food is wrong. You can complain, get a refund, reorder. The feedback loop is: describe problem → someone else fixes it.

Now imagine you’re in a kitchen and the sauce breaks. If you’re a cook, you know why — too much heat, added the dairy too fast, didn’t temper properly. You fix it. If you’re a DoorDash power user standing in a kitchen for the first time, you’re googling “sauce weird texture help” and hoping for the best.

In software, this is the debugging cliff. Vibe coders hit it hard and hit it often. When the AI-generated code breaks in production, they have two options: paste the error back and pray, or stare at code they fundamentally don’t understand. There’s no third option, because they never built the mental models that would let them reason about what went wrong.

And the scariest bugs — the security vulnerabilities, the race conditions, the subtle data corruption — don’t announce themselves with error messages. They require the kind of deep understanding that only comes from having written (and broken) things yourself.
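Here is a small, hedged example of a bug that never raises an error, using Python’s built-in `sqlite3` module. The table, the data, and the function names are hypothetical. Both functions behave identically for ordinary input, so a quick manual test will not tell them apart. Only the first one lets a crafted string rewrite the query.

```python
import sqlite3

# In-memory database with made-up data, just for this illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
conn.executemany("INSERT INTO users VALUES (?, ?)", [("alice", 1), ("bob", 0)])


def find_user_unsafe(name):
    # Vulnerable: the input is pasted directly into the SQL text.
    query = f"SELECT name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(name):
    # Parameterized: the value is passed separately from the SQL text.
    return conn.execute(
        "SELECT name FROM users WHERE name = ?", (name,)
    ).fetchall()


# Input that turns the unsafe WHERE clause into an always-true condition.
malicious = "nobody' OR '1'='1"
```

Calling `find_user_unsafe(malicious)` returns every row in the table, while `find_user_safe(malicious)` returns nothing. Neither call produces a warning, a stack trace, or anything else you could paste back into a prompt.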

“But I Can Always Just Ask the AI”

This is the most common pushback, and it deserves a direct response.

Yes, you can always ask the AI. Just like you can always order delivery. But consider three things:

First, you can’t evaluate what you don’t understand. If you ask an AI to fix a security vulnerability and it generates a response, how do you know it actually fixed it? How do you know it didn’t introduce a new one? Evaluating AI output requires the very knowledge that vibe coding skips.

Second, AI tools will get better, but the problems will get harder too. As software eats more of the world, the systems we build grow more complex. AI will handle the easy parts, as it already does. What remains for humans is the hard stuff: the architecture decisions, the trade-off analysis, the debugging of systems too large for any context window. Those skills only come from understanding fundamentals.

Third, and most practically: every company that hires developers will eventually figure out that vibe coders and real engineers are not the same thing. The interview might change form, but the signal employers look for — can this person reason about software? — won’t go away just because prompting exists.

The Middle Path

None of this means AI coding tools are bad. They’re extraordinary. A skilled developer using AI is dramatically more productive than a skilled developer without it. The key word is skilled.

The ideal path looks something like this: learn the fundamentals first, build the mental models, understand why before how — and then use AI to amplify that understanding. Use it to handle boilerplate. Use it to explore unfamiliar frameworks faster. Use it to rubber-duck your architecture decisions.

This is the difference between a chef who uses a food processor and someone who’s never picked up a knife. The tool makes the expert faster. It doesn’t make the novice an expert.

The Real Cost

The real cost of vibe coding as a learning strategy isn’t that you build bad software today — honestly, you might build something passable. The real cost is opportunity cost. Every hour spent prompting without understanding is an hour you could have spent building the foundation that makes you genuinely capable.

Six months of vibe coding gives you six months of prompts. Six months of actually learning to code gives you a skillset that compounds for the rest of your career, with or without AI.

The food delivery apps aren’t going anywhere. Neither are AI coding tools. But if you want to actually cook — if you want to build things you understand, debug problems you can reason about, and have a skill that doesn’t evaporate the moment the tool changes — you have to put in the work that no shortcut can replace.

Stop vibing. Start building.