The human did the engineering. The AI did the typing.

A three-hour debugging session, a 5× speedup, and why your problem-solving skills matter more in the AI era — not less.

Yesterday Claude Code and I spent three hours optimizing a hot loop in Rheon.xyz, the DEX aggregator I’m building. The final diff was about 80 lines. We went from 7 seconds to 1.4 seconds per query.

Great outcome. But the more interesting story is where the ideas came from.

Because at AlgoCademy we’ve been arguing for months that the old pitch — “learn to code, syntax is the hard part” — is dead. AI writes syntax now. The actually-valuable skill is the thinking above the syntax: algorithmic intuition, the instinct to smell a wrong result, knowing your toolkit deeply enough to reach for the right trick at the right moment.

Yesterday’s session was a textbook case study. Let me walk you through it.

The setup

A function in the solver (written in Rust) was getting called 100,000 times per user query. Each call walked a list of 400–700 items in a linear loop. The whole thing took 7 seconds. Too slow for anything user-facing.

Classic optimization problem. The kind of thing you’d see in a coding interview — except it was running on real user money.

The wrong first instinct (Claude’s)

Claude’s opening move was: “Let’s sample the input space more aggressively.” We already had an approximation scheme using 18 buckets; Claude wanted to try 36. I let it run the experiment.

Result: basically a wash. Some trades faster, some slower, and the cost of generating the extra data tripled our indexing pipeline spend.

Without a human in the loop, we would have shipped a change that made things marginally worse while costing more money. Not because Claude is dumb — because it was pattern-matching to a local optimum without questioning the frame.

The right idea (mine)

“Can’t we just precompute prefix sums and binary-search them?”

If you’ve done any competitive programming, you recognize this immediately. It’s the static cousin of the Fenwick tree / BIT pattern: with the tables precomputed once per pool, a plain cumulative array is enough. The math inside each range was closed-form; only the boundaries needed to be accumulated. Precompute once per pool, binary-search at query time. An O(N) walk becomes an O(log N) lookup.
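To make the pattern concrete, here is a minimal Rust sketch. It is not Rheon's code: the caps slice (per-range capacities) and all three function names are hypothetical, and the closed-form math inside each range is left out. It only shows the accumulate-once, binary-search-later shape.

```rust
/// Builds cumulative sums: prefix[i] = caps[0] + ... + caps[i].
/// `caps` is a hypothetical stand-in for per-range capacities.
pub fn build_prefix_sums(caps: &[u64]) -> Vec<u64> {
    let mut total = 0u64;
    caps.iter()
        .map(|&c| {
            total += c;
            total
        })
        .collect()
}

/// The O(N) walk: index of the first range where the running total
/// reaches `amount`, or caps.len() if it never does.
pub fn find_range_linear(caps: &[u64], amount: u64) -> usize {
    let mut total = 0u64;
    for (i, &c) in caps.iter().enumerate() {
        total += c;
        if total >= amount {
            return i;
        }
    }
    caps.len()
}

/// The O(log N) lookup: same answer, read off the precomputed sums.
/// Works because prefix sums of non-negative values are sorted.
pub fn find_range_binary(prefix: &[u64], amount: u64) -> usize {
    prefix.partition_point(|&sum| sum < amount)
}
```

Build the prefix array once, then call find_range_binary per query. The two lookups should agree on every input, which is an easy property to test before trusting the fast path.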

Claude implemented it cleanly. Accuracy was bit-for-bit perfect.

The speedup was 5%.

On a path where the theory said 10–50×.

“Something is fishy”

This is the moment that decided whether the day ended in a success or a half-broken PR.

Claude started generating plausible-sounding explanations: “maybe the arrays aren’t big enough for log N to help, maybe there are cache misses, maybe partition_point has overhead.” This is the kind of senior-sounding hand-waving that, if you accept it, gets you to shipping.

I didn’t accept it. “200 elements is 1.6KB. That fits in L1 cache. Cache misses don’t explain this.”

We ran one experiment that took five minutes: replace the function body with return constant. If the lookup was really the bottleneck, the program would get dramatically faster. It didn’t budge.
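The experiment is easy to reproduce in miniature. The sketch below is a toy, not our harness: time_it, toy_solve, and the closure-based lookup parameter are all hypothetical names chosen for illustration.

```rust
use std::hint::black_box;
use std::time::{Duration, Instant};

/// Times a single run of `run` and returns its result with the elapsed time.
pub fn time_it<T>(run: impl FnOnce() -> T) -> (T, Duration) {
    let start = Instant::now();
    // black_box keeps the optimizer from discarding the work being timed.
    let out = black_box(run());
    (out, start.elapsed())
}

/// Hypothetical stand-in for the solver: calls `lookup` once per query
/// and sums the results. A real solve would do other work around each call.
pub fn toy_solve(lookup: impl Fn(u64) -> usize, calls: u64) -> usize {
    (0..calls).map(|q| lookup(black_box(q))).sum()
}
```

Run it once with the real lookup and once with a stub such as |_| 0. If the two durations are close, the lookup was never the bottleneck and the time is going somewhere else.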

Which meant the slow part was somewhere else entirely.

Claude finally went and looked at how the data structure was being used. The object holding the lookup tables was being cloned ~50 times per solve, and each clone was deep-copying four 200-element vectors. The allocator was the bottleneck — not the lookup itself.

One-line fix: wrap the tables in Arc<>, so clones become a refcount bump instead of four heap allocations.
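A minimal sketch of the difference, assuming a hypothetical Tables struct with four vectors (the field and type names here are invented, not the solver's):

```rust
use std::sync::Arc;

/// Hypothetical lookup tables: four vectors, as described above.
#[derive(Clone)]
pub struct Tables {
    pub bounds: Vec<u64>,
    pub sums_a: Vec<u64>,
    pub sums_b: Vec<u64>,
    pub extra: Vec<u64>,
}

impl Tables {
    /// Fills all four vectors with `len` placeholder values.
    pub fn with_len(len: usize) -> Self {
        let v: Vec<u64> = (0..len as u64).collect();
        Tables {
            bounds: v.clone(),
            sums_a: v.clone(),
            sums_b: v.clone(),
            extra: v,
        }
    }
}

/// Before: cloning the pool deep-copies all four vectors (four allocations).
#[derive(Clone)]
pub struct PoolDeep {
    pub tables: Tables,
}

/// After: cloning the pool only bumps the Arc's reference count.
#[derive(Clone)]
pub struct PoolShared {
    pub tables: Arc<Tables>,
}
```

Both structs derive Clone, so call sites do not change. The catch is that Arc gives shared, read-only access, which is fine for tables that are precomputed and never mutated afterwards.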

Speedup: 40%.

Not yet at the floor

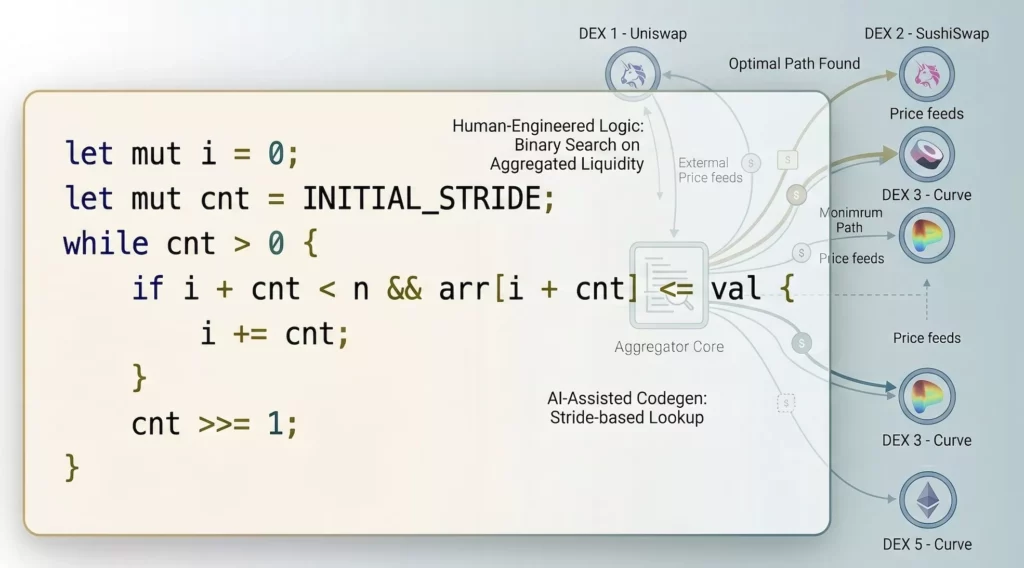

I could have stopped there. Deadline met. But theory still said we should be faster. I proposed a branchless binary search — the kind of snippet competitive programmers memorize:

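    // Precondition: arr is sorted ascending and arr[0] <= val.
    let mut i = 0;
    let mut cnt = INITIAL_STRIDE; // a power of two
    while cnt > 0 {
        // Take the jump only if it stays in bounds and does not overshoot val.
        if i + cnt < n && arr[i + cnt] <= val {
            i += cnt;
        }
        cnt >>= 1;
    }
    // i is now the last index with arr[i] <= val.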

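Branchless because the compiler can lower the inner body to one compare and one conditional move, so there is no data-dependent branch to mispredict. The only branch left is the loop’s back-edge, and CPU branch predictors eat that for breakfast: the strides follow the same fixed sequence on every call, and the loop count is log₂ of a known number.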

Claude wrote it. 10× faster than stdlib’s partition_point. Most of the way to the theoretical floor, in one change. But theory said there was still a bit more headroom.

The unlock

“What if INITIAL_STRIDE is a compile-time constant?”

Claude’s first answer was that it wouldn’t matter much. I asked again, more directly. Then it clicked: when INITIAL_STRIDE is const, LLVM can prove the loop runs exactly log₂(STRIDE) + 1 iterations. It fully unrolls into straight-line code: ~10 inline cmp + cmov sequences, no loop counter, no back-edge branch, not even the shift instruction. Each step still depends on the previous value of i, so the chain runs in order, but there is no loop bookkeeping left competing with it.

Which is roughly a 2× win on top of the branchless version — exactly what you’d predict from removing the stride/counter/branch overhead.
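Here is a self-contained sketch of the constant-stride version, with a cross-check against the standard library. The function names and the u64 element type are assumptions for illustration. Whether LLVM actually unrolls the loop depends on the compiler version and optimization level, so inspect the generated assembly rather than taking it on faith.

```rust
/// Compile-time stride, as Claude first wrote it.
const INITIAL_STRIDE: usize = 1 << 10;

/// Last index i with arr[i] <= val.
/// Assumes arr is non-empty, sorted ascending, and arr[0] <= val;
/// if val is below arr[0], the result is 0.
#[inline]
pub fn search_const_stride(arr: &[u64], val: u64) -> usize {
    let n = arr.len();
    let mut i = 0;
    // The trip count is fixed by the constant, not by the data.
    let mut cnt = INITIAL_STRIDE;
    while cnt > 0 {
        if i + cnt < n && arr[i + cnt] <= val {
            i += cnt;
        }
        cnt >>= 1;
    }
    i
}

/// Reference answer using the standard library's binary search.
pub fn search_std(arr: &[u64], val: u64) -> usize {
    arr.partition_point(|&x| x <= val).saturating_sub(1)
}
```

Keeping the stdlib version around as an oracle is cheap: run both over every value in range and compare before benchmarking anything.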

Final speedup on the lookup function: 18× over the original linear walk. Total query time drops from 7 seconds to 1.4 — lookup work is now essentially free, and what’s left is genuinely elsewhere in the solver.

One last catch

Claude wrote const INITIAL_STRIDE: usize = 1 << 10 — 1024. I asked: “our biggest pool has 670 ticks. Won’t the first iteration of a 1024 stride always do nothing, because i + 1024 is always out of bounds?”

Right. The maximum reachable index from stride S is S + S/2 + ... + 1 = 2S − 1, so 1 << 9 = 512 reaches index 1023 and covers arrays of up to 1024 entries. That handles our 670 without wasting an iteration. One constant changed. Tiny win.
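The arithmetic is small enough to encode and test. This is a sketch with hypothetical helper names, assuming the stride is always a power of two:

```rust
/// Largest index reachable from a power-of-two stride:
/// stride + stride/2 + ... + 1 = 2 * stride - 1.
/// Assumes stride >= 1.
pub const fn max_reachable_index(stride: usize) -> usize {
    2 * stride - 1
}

/// Smallest power-of-two stride that can reach the last index
/// (len - 1) of an array with `len` entries.
pub fn smallest_sufficient_stride(len: usize) -> usize {
    let mut stride = 1;
    // An array of `len` entries needs reach >= len - 1,
    // written as reach + 1 >= len to avoid underflow at len == 0.
    while max_reachable_index(stride) + 1 < len {
        stride <<= 1;
    }
    stride
}
```

For 670 entries this gives 512. A stride of 1024 only becomes necessary once an array grows past 1024 entries.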

Now the real point

Let me count where the wins came from:

- Recognizing the prefix-sum pattern: competitive programming fundamentals.

- Knowing that a 5% speedup when theory says 50× means something is wrong: calibrated intuition for performance work.

- Smelling “cache miss” as a hand-wave rather than an explanation: having debugged real systems before.

- Proposing branchless binary search: knowing the toolkit.

- Recognizing that compile-time constants unlock LLVM optimizations: knowing the compiler.

- Catching the 1 << 10 vs 1 << 9 off-by-one: reading carefully, thinking about edge cases.

Zero of these came from the AI.

Every one of them is the kind of thing you build through years of solving problems — or, more efficiently, through deliberate practice on the patterns that actually show up in real systems.

Claude wrote beautiful code, fast. Claude was also wrong twice, hand-waved once, and left in a wasted iteration that I caught on re-read. Without a human who’d thought about prefix sums, cache behavior, and branch prediction, we’d have shipped a 5% improvement, called it done, and moved on.

This is what AI pair programming looks like when it actually works. Not “AI does the work, human reviews.” More like: AI executes fast, human drives the direction. The better the AI gets, the more leverage your engineering taste provides.

When I hire, I don’t care that you can write a for loop. AI can write a for loop. I care that when the benchmark comes back 5% faster instead of 50×, you stop and ask why. I care that you recognize a prefix-sum problem before reaching for brute force. I care that you know what LLVM can and can’t prove about your code.

Syntax is cheap. Judgment compounds.

That’s the skill worth building. That’s what we’re trying to teach at AlgoCademy.

Keep sharpening the judgment — it’s the one thing the AI can’t do for you.